Since Linux ISOs are typically unique files, deduplication doesn’t really have any effect.

Oh, so it’s just good for Windows? Well still, I also have a local Windows Plex server (aside from my RPi server).

I’m using Linux ISOs as a replacement for what Plex servers actually ingest. Deduplication works regardless of the platform, but it won’t have any effect if all files are entirely unique (which most, if not all, media files are).

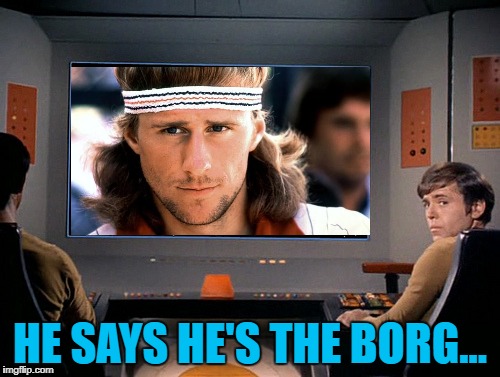

Yep, dedup works on files that may share the same content (the way borgbackup operates lets it dedup within a file too, and dedup chunks belonging to multiple files). Anyway, media elements (images, photos, videos) are often pretty unique, and the data they contain is already compressed, so it can’t be sensibly compressed any further (I mean, losslessly compressed with zip/gz/xz/lzop/zstd on top of h264/h265/aac/jpg/whatever).
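
To illustrate the compression point, here is a minimal Python sketch. It assumes random bytes are a fair stand-in for already-compressed media (real h264 or jpg data is not truly random, but behaves similarly under a general-purpose compressor), and the repeated log line is made up for contrast.

```python
import os
import zlib


def compression_ratio(data: bytes) -> float:
    """Return compressed size divided by original size (lower is better)."""
    return len(zlib.compress(data, 9)) / len(data)


# Assumption: random bytes stand in for already-compressed media.
media_like = os.urandom(1_000_000)
# Made-up, highly repetitive text, similar to a log file.
text_like = b"2022-03-01 INFO backup finished OK\n" * 30_000

print(f"media-like: {compression_ratio(media_like):.3f}")
print(f"text-like:  {compression_ratio(text_like):.3f}")
```

The media-like ratio should come out at roughly 1.0 (no gain), while the repetitive text shrinks to a small fraction of its size.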

Ah, fair enough.

A list of backup stuff right here:

I use restic on a number of systems to perform daily backups. Seems to perform quite well.

I use zfs on the server and rsync.net for offsite - block level dedupe is pretty nice

One of the key differences is that incremental backups will always grow over time, as earlier backups are essentially immutable (they’re a snapshot in time that can’t be changed), and new backups just layer changes on top of them. In order to prune files that have been deleted in old backups and shrink the storage space used, you need to perform a fresh full backup and then delete the old backups, which is slow. I used to do this with Duplicity: daily incremental backups, monthly full backups. This also means that in order to restore a backup for a given date, you need the most recent full backup before that date along with all incremental changes from that full backup up until the date you want to restore.
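
As a rough sketch of that restore dependency (the catalogue below and the `restore_chain` helper are hypothetical, not Duplicity’s actual data model), restoring a date means collecting the last full backup plus every incremental after it:

```python
from datetime import date

# Hypothetical backup catalogue: (date, kind), oldest first.
backups = [
    (date(2022, 1, 1), "full"),
    (date(2022, 1, 2), "incr"),
    (date(2022, 1, 3), "incr"),
    (date(2022, 2, 1), "full"),
    (date(2022, 2, 2), "incr"),
]


def restore_chain(backups, target):
    """Return the backups needed to restore the state as of `target`."""
    candidates = [b for b in backups if b[0] <= target]
    # Index of the most recent full backup on or before the target date.
    # Raises ValueError if no full backup exists yet.
    last_full = max(i for i, b in enumerate(candidates) if b[1] == "full")
    return candidates[last_full:]


chain = restore_chain(backups, date(2022, 1, 3))
print([kind for _, kind in chain])
```

Losing any one entry in the chain breaks the restore for every later date until the next full backup, which is why the old backups can’t simply be deleted one by one.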

Something like Borgbackup that dedupes at a block level is a lot smarter. There’s a whole heap of advantages:

- To restore a backup, you only need the blocks used by that backup, rather than the previous full backup + all the incremental changes up until that point

- When old backups are removed, if any of the blocks it used are no longer required, they can be pruned without having to do a new full backup like you’d need to do with an incremental backup

- You can do incremental backups ‘forever’ without the repo bloating out in size
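
The three points above can be sketched with a toy chunk store. This is a simplification under stated assumptions, not how Borg is implemented: it uses tiny fixed-size chunks, whereas Borg uses content-defined, variable-size chunks and adds compression and encryption. The `ChunkRepo` class and archive names are made up for illustration.

```python
import hashlib

CHUNK_SIZE = 4  # tiny fixed-size chunks, for illustration only


class ChunkRepo:
    def __init__(self):
        self.chunks = {}    # chunk id -> bytes
        self.refcount = {}  # chunk id -> number of references from archives
        self.archives = {}  # archive name -> ordered list of chunk ids

    def create(self, name, data):
        ids = []
        for i in range(0, len(data), CHUNK_SIZE):
            chunk = data[i:i + CHUNK_SIZE]
            cid = hashlib.sha256(chunk).hexdigest()
            self.chunks.setdefault(cid, chunk)  # each chunk is stored once
            self.refcount[cid] = self.refcount.get(cid, 0) + 1
            ids.append(cid)
        self.archives[name] = ids

    def restore(self, name):
        # Only the chunks listed by this archive are needed.
        return b"".join(self.chunks[cid] for cid in self.archives[name])

    def delete(self, name):
        # Drop chunks that no remaining archive references.
        for cid in self.archives.pop(name):
            self.refcount[cid] -= 1
            if self.refcount[cid] == 0:
                del self.refcount[cid]
                del self.chunks[cid]


repo = ChunkRepo()
repo.create("monday", b"AAAABBBBCCCC")
repo.create("tuesday", b"AAAABBBBDDDD")
print(len(repo.chunks))         # 4 unique chunks for 6 chunks of input
repo.delete("monday")
print(len(repo.chunks))         # 3: only the chunk unique to monday is gone
print(repo.restore("tuesday"))  # b'AAAABBBBDDDD'
```

Every archive is effectively a full backup that only costs the space of its new chunks, so there is no chain to replay on restore and no need for a fresh full backup before pruning.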

Thanks for explaining it so thoroughly, @Daniel. I’ll definitely look more into the options I have once I have time.

Big fan of Borg for servers, have it configured with BorgBase to send automatic backups.

On my (Windows) computer I use Arq Backup, it’s not perfect but it works well for what I do. I used to use Duplicati but ran into extremely slow restores and frequent bugs on the web panel so I looked for alternatives and ended up finding Arq.

Do you send ZFS snapshots to rsync.net or just files? I use ZFS heavily and would love somewhere to send incremental snapshots without having to manage my own ZFS server or abstracting them as files manually.

At this point just files, for a couple of reasons:

- my backup strategy was implemented before rsync.net offered ZFS snapshots

- one of the servers can’t run ZFS - running the same backup on all servers is handy

- I am only backing up the home directories - not the entire mount

I do however expect to replace that last server and do a re-evaluation sometime this year

I use rsnapshot. It’s basically rsync with deduplication (using hardlinks). I like that it’s simple and works well.

Want to delete a backup? Just go ahead and delete it. The hardlinks have you covered. Individual files can quickly be referenced because they’re just sitting in the folder.

The disadvantage is that the backup system needs to support hardlinks. So, no storing backups on Dropbox. Also, no encryption.
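
For anyone curious why deleting a backup is safe with hardlinks, here is a small Python sketch of the mechanism. It is not rsnapshot itself; the `daily.0`/`daily.1` directory names and the file are only illustrative, and it assumes the temp directory sits on a filesystem that supports hardlinks.

```python
import os
import tempfile

with tempfile.TemporaryDirectory() as root:
    day1 = os.path.join(root, "daily.1")  # older backup
    day0 = os.path.join(root, "daily.0")  # newer backup
    os.mkdir(day1)
    os.mkdir(day0)

    old = os.path.join(day1, "notes.txt")
    new = os.path.join(day0, "notes.txt")
    with open(old, "w") as f:
        f.write("unchanged between backups\n")

    # The unchanged file is hardlinked into the newer backup, not copied.
    os.link(old, new)
    same_inode = os.stat(old).st_ino == os.stat(new).st_ino
    links_before = os.stat(new).st_nlink

    # Deleting the older backup's entry leaves the data reachable via the newer one.
    os.remove(old)
    with open(new) as f:
        survived = f.read()
    links_after = os.stat(new).st_nlink

print(same_inode, links_before, links_after)
print(survived, end="")
```

Both names point at the same data on disk, and the data is only freed once the last link to it is removed.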

At BorgBase we sponsor multiple open source projects around Borg. So naturally I’m happy to recommend that and equally happy to see a healthy ecosystem and a major Borg release 1.2 just a few days ago. Would definitely check that out.

I also know the rsync + hard links technique and used it for years. The main benefit is that you can directly read your files. Drawbacks are the lack of compression, deduplication, and encryption, plus inefficient handling of small changes to large files and poor scalability with many files and versions.

I forgot about compression. That’s a big one.